Kubernetes 101

An Introduction to the Powerful Container Orchestration Tool

I am a Linux enthusiast with over 20 years of experience in the open-source community. I am passionate about helping others learn more about Linux and open-source software, and have written numerous tutorials on topics such as system administration and scripting. In my spare time, I enjoy playing retro video games and tinkering with new technologies.

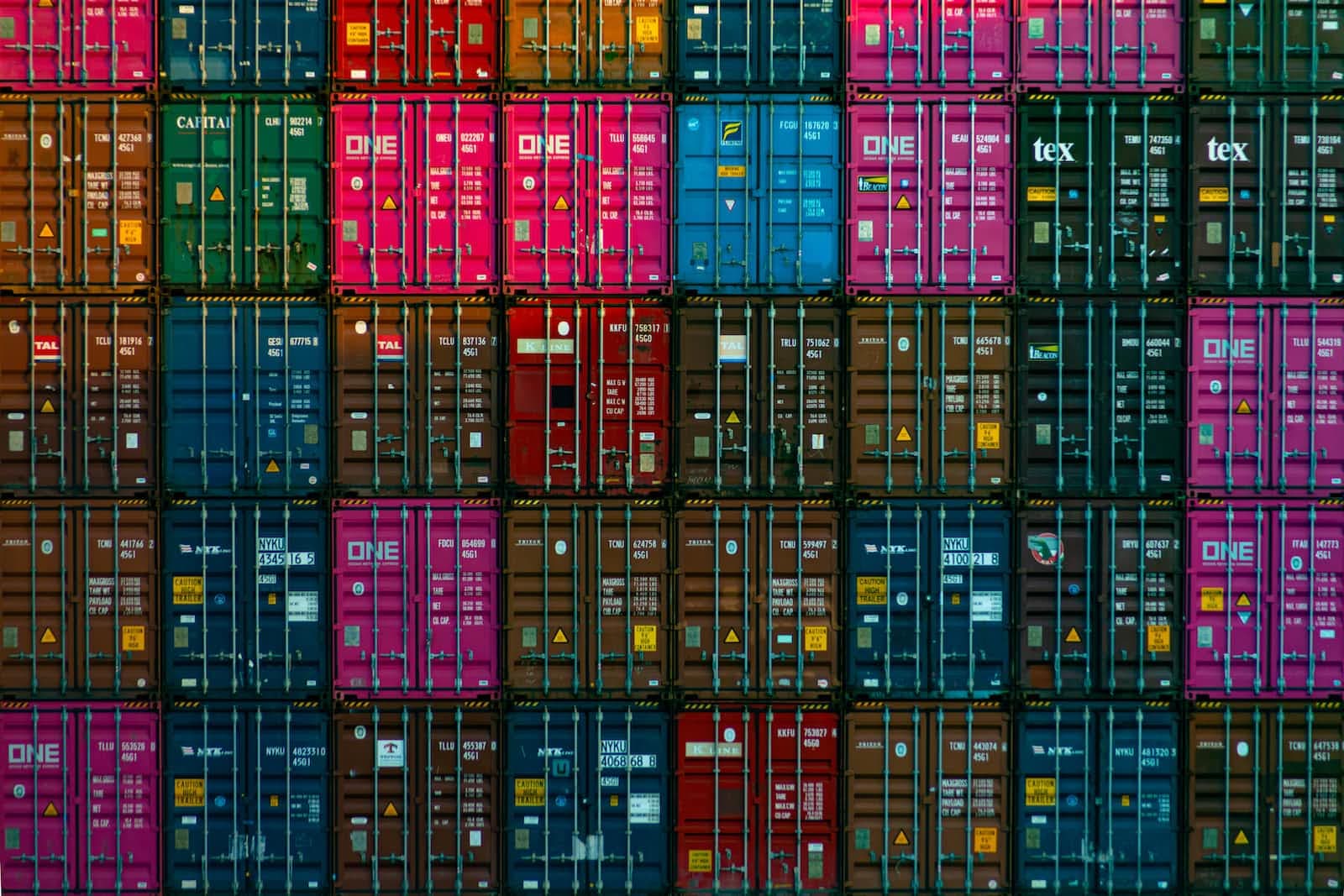

Overview of Kubernetes

Kubernetes is an open-source container orchestration platform for deploying, managing, and scaling containerized applications, whether in the cloud or on-premises. It automates many of the manual processes involved in running distributed applications, allowing developers to focus on building their applications instead of worrying about infrastructure. Kubernetes gives teams a consistent way to deploy and manage complex microservices architectures. With features such as deployment automation, resource scheduling, self-healing, service discovery, and rolling updates, Kubernetes helps organizations adopt modern application architectures without sacrificing scalability or reliability.

Benefits of using Kubernetes

Kubernetes offers organizations several practical benefits. Applications can be scaled up or down as needed to meet customer demand, so teams can respond quickly to sudden changes in load. Kubernetes also automates the rollout of updates and checks that new versions come up healthy before replacing old ones, so teams can ship new features rapidly and reliably. In addition, packing containers onto shared nodes instead of dedicating a traditional virtual machine to each application can reduce infrastructure costs while still delivering reliable performance. Together, improved scalability, increased efficiency, and cost savings help businesses serve their customers better over time.

Deploying Applications with Kubernetes

Kubernetes helps you deploy and manage applications in a distributed architecture. It enables users to create, update, scale, and monitor their applications on any cloud platform or in an on-premises environment. With Kubernetes, developers can use the same tools and manifests for development and deployment across multiple environments. The system also supports automated rollouts of new versions of your application, as well as rolling back a deployment if something goes wrong. Furthermore, it is self-healing: containers are automatically restarted when they crash or fail their health checks, and pods are rescheduled when a node fails. This makes deploying applications with Kubernetes a reliable option for businesses looking to maximize uptime without sacrificing performance or scalability.
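To make the rollout behavior described above more concrete, here is a minimal sketch of a Deployment manifest built as a Python dictionary and printed as JSON, which `kubectl` accepts alongside YAML. The application name `web`, the image `nginx:1.25`, and the helper `make_deployment` are assumptions chosen for illustration; the field names follow the `apps/v1` Deployment API.

```python
import json


def make_deployment(name, image, replicas=3, port=80):
    """Build an apps/v1 Deployment manifest as a plain dict.

    The name, image, and port are illustrative values, not a recommendation.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            # Replace pods gradually instead of all at once.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxUnavailable": 1, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(make_deployment("web", "nginx:1.25"), indent=2))
```

Assuming the script is saved as `deployment.py`, the output could be piped into `kubectl apply -f -` to create or update the Deployment. Changing the image and applying again triggers a rolling update, and `kubectl rollout undo deployment/web` returns to the previous revision.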

Scaling and Orchestration with Kubernetes

Kubernetes allows developers to manage, deploy, and scale applications across a cluster of machines. It automates the deployment of containerized applications across multiple nodes: users declare how their application should be deployed and how many replicas should run, and Kubernetes works to keep the cluster in that state. It also lets users roll out updates and changes without manually reconfiguring or redeploying services. Additionally, it helps improve resource utilization by scaling workloads up or down based on demand, and it provides fault tolerance by running multiple replicas and rescheduling pods away from failed nodes. In short, Kubernetes helps developers manage complex deployments while taking advantage of the scalability and resiliency offered by cloud computing platforms like AWS or GCP.

Security Best Practices for Kubernetes

Kubernetes is a powerful and versatile container orchestration platform, but it can also be vulnerable to security risks if not managed properly. To keep your Kubernetes environment safe, it is important to follow best practices when setting up and managing your clusters. Key security best practices include using role-based access control (RBAC) to manage user permissions, implementing network policies to limit communication between pods in a cluster, encrypting sensitive data at rest and in transit, scanning images for vulnerabilities before deploying them into production environments, enabling audit logging to track changes made within the system, and running regular vulnerability scans on the entire cluster. Following these steps reduces the attack surface of your Kubernetes environment, although no checklist can guarantee that it is secure.
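Two of the practices above, RBAC and network policies, are expressed as ordinary Kubernetes objects. The following is a minimal sketch that builds a read-only Role and a default-deny ingress NetworkPolicy as Python dictionaries. The namespace `demo`, the object names, and both helper functions are assumptions for illustration; the API groups and field names follow the `rbac.authorization.k8s.io/v1` and `networking.k8s.io/v1` APIs.

```python
import json


def pod_reader_role(namespace):
    """Role that only allows reading pods in one namespace."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": "pod-reader", "namespace": namespace},
        "rules": [
            {
                "apiGroups": [""],  # "" is the core API group
                "resources": ["pods"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }


def default_deny_ingress(namespace):
    """NetworkPolicy that selects every pod in the namespace and allows no ingress."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector matches all pods in the namespace
            "policyTypes": ["Ingress"],  # no ingress rules listed, so none is allowed
        },
    }


if __name__ == "__main__":
    for manifest in (pod_reader_role("demo"), default_deny_ingress("demo")):
        print(json.dumps(manifest, indent=2))
```

A Role grants nothing until a RoleBinding attaches it to a user, group, or service account, and a NetworkPolicy only takes effect if the cluster's network plugin enforces network policies.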

Monitoring and Logging with Kubernetes

Kubernetes provides several building blocks to help you monitor and log the performance of your applications. Built-in features include container logs, resource metrics, and health checks (probes), and these are commonly combined with third-party tools such as Prometheus for metrics or Elasticsearch for log storage and search. Together, these resources give you visibility into how your application is performing in production. With proper logging enabled, it’s also possible to quickly identify issues as they arise so they can be addressed as soon as possible. Additionally, Kubernetes helps you troubleshoot problems by exposing detailed status information about each pod and container in a deployment. Taking advantage of these monitoring and logging features makes it much easier to keep your applications running reliably.
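The health checks mentioned above are configured per container as probes. Below is a minimal sketch of a container spec with an HTTP liveness probe and readiness probe, built as a Python dictionary. The endpoint paths `/healthz` and `/ready`, the image `example/api:1.0`, the timing values, and the helper names are all assumptions; the probe field names are those of the Kubernetes container spec.

```python
import json


def http_probe(path, port, initial_delay=5, period=10, failure_threshold=3):
    """Describe an HTTP GET probe; the timings here are illustrative defaults."""
    return {
        "httpGet": {"path": path, "port": port},
        "initialDelaySeconds": initial_delay,
        "periodSeconds": period,
        "failureThreshold": failure_threshold,
    }


def container_with_probes(name, image, port=8080):
    """Container spec fragment with liveness and readiness probes attached."""
    return {
        "name": name,
        "image": image,
        "ports": [{"containerPort": port}],
        # Repeated liveness failures cause the kubelet to restart the container.
        "livenessProbe": http_probe("/healthz", port),
        # While readiness fails, the pod is removed from Service endpoints.
        "readinessProbe": http_probe("/ready", port, initial_delay=2, period=5),
    }


if __name__ == "__main__":
    print(json.dumps(container_with_probes("api", "example/api:1.0"), indent=2))
```

This fragment would sit in the `containers` list of a pod template. Once the workload is running, `kubectl logs <pod-name>` shows a container's output and `kubectl describe pod <pod-name>` lists recent probe failures among the pod's events.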

Continuous Integration and Delivery (CI/CD) in Kubernetes

Continuous Integration and Delivery (CI/CD) pairs naturally with Kubernetes and streamlines the development process, allowing developers to deploy applications to a cluster quickly and repeatably. With CI/CD, teams automate the building, testing, and deployment of code changes into production environments. This automation reduces errors caused by manual configuration or deployment mistakes, and it increases efficiency by eliminating time spent configuring and deploying applications by hand. Additionally, CI/CD pipelines allow teams to monitor the progress of their deployments in real time so that they can be alerted if any issues arise during the process. By running CI/CD pipelines against Kubernetes clusters, organizations can improve their productivity while reducing the costs associated with manual configuration and deployment processes.

Networking in a Kubernetes Cluster

Networking is an essential part of managing a Kubernetes cluster's workloads and services. It enables communication between pods, Services, and nodes, and it provides external access to applications running inside the cluster. The networking layer consists of several components, such as network plugins, service proxies, load balancers, and ingress controllers, which work together to route traffic between different parts of your system. Note that namespaces do not isolate network traffic on their own: by default, pods can reach each other across namespaces, and restricting that traffic requires network policies enforced by a network plugin that supports them. To ensure high availability and scalability for your Kubernetes deployment, you need to plan carefully how you will configure your networking layer to meet your requirements.
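As an illustration of how pods are exposed inside the cluster, the sketch below builds a ClusterIP Service manifest and derives the DNS name other pods would typically use to reach it. The service name `web`, the port numbers, and both helper functions are assumptions; `cluster.local` is the common default cluster domain but can be configured differently.

```python
import json


def make_service(name, app_label, port=80, target_port=8080, namespace="default"):
    """Build a ClusterIP Service that forwards to pods labeled app=<app_label>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "type": "ClusterIP",  # reachable only from inside the cluster
            "selector": {"app": app_label},
            "ports": [
                {"protocol": "TCP", "port": port, "targetPort": target_port}
            ],
        },
    }


def cluster_dns_name(service, namespace="default", cluster_domain="cluster.local"):
    """Fully qualified in-cluster DNS name, assuming the given cluster domain."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


if __name__ == "__main__":
    print(json.dumps(make_service("web", "web"), indent=2))
    print(cluster_dns_name("web"))
```

The Service gives a stable virtual IP and name in front of a changing set of pods. Exposing the application outside the cluster would typically use a `LoadBalancer` Service or an Ingress in front of it.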

Optimizing Performance in a Kubernetes Environment

Optimizing performance in a Kubernetes environment is essential for ensuring that applications and services run smoothly and efficiently. Properly configured, Kubernetes can provide high availability, scalability, and fault tolerance to your applications. To optimize the performance of workloads running on Kubernetes, keep several best practices in mind: set appropriate resource requests and limits for each container; monitor CPU and memory utilization; configure liveness and readiness probes; use horizontal pod autoscalers (HPAs) to add or remove pods based on CPU or memory usage; enable log collection for troubleshooting purposes; and add a caching layer such as Redis or Memcached where the workload benefits from it. Following these practices helps you get consistent performance from applications running within a Kubernetes cluster.
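To show how a horizontal pod autoscaler arrives at a replica count, here is a simplified sketch of the scaling rule documented for the HPA: the desired count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name and the default bounds are assumptions, and the real controller additionally accounts for unready pods, missing metrics, and stabilization windows, which this sketch ignores.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA rule: ceil(current_replicas * current_metric / target_metric).

    No change is made while the ratio is within `tolerance` of 1.0, and the
    result is clamped to [min_replicas, max_replicas].
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target; do not scale
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # 4 pods averaging 30% CPU against a 60% target: scale in.
    print(desired_replicas(4, 30, 60))
```

With these inputs the function returns 6 and then 2. Because CPU utilization is measured relative to each container's CPU request, autoscaling on CPU only works sensibly when resource requests are set.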

Troubleshooting Common Issues in Kubernetes

Kubernetes is an incredibly powerful platform for deploying and managing applications, but as with any system, there can be issues that arise from time to time. Thankfully, troubleshooting common problems in Kubernetes doesn't have to be a daunting task; by understanding the fundamentals of how your application works and knowing where to look for errors, you can quickly identify and resolve issues before they become major roadblocks. Some of the most common issues seen when running applications on Kubernetes include: deployment failures due to incorrect configurations or resource limits; pods crashing unexpectedly; nodes becoming unresponsive or going offline; network connectivity problems between services; and slow performance due to inadequate resources or misconfigured settings. By taking the time to understand these potential pitfalls ahead of time, you can save yourself considerable effort down the line when it comes to resolving unexpected problems with your deployments.
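For the crashing-pod case in particular, much of the diagnosis comes from the container statuses that Kubernetes records on each pod. The sketch below scans a pod list in the shape returned by `kubectl get pods -o json` and reports containers that are stuck in a waiting state or restarting frequently. The function name, the restart threshold, the `FrequentRestarts` label, and the sample data are assumptions for illustration; the `containerStatuses`, `state.waiting.reason`, and `restartCount` fields are part of the pod status.

```python
import json


def find_unhealthy_containers(pod_list, restart_threshold=5):
    """Return (pod, container, reason) tuples for containers that look unhealthy."""
    problems = []
    for pod in pod_list.get("items", []):
        pod_name = pod["metadata"]["name"]
        for status in pod.get("status", {}).get("containerStatuses", []):
            waiting = status.get("state", {}).get("waiting")
            if waiting:
                # Typical reasons include CrashLoopBackOff and ImagePullBackOff.
                reason = waiting.get("reason", "Unknown")
                problems.append((pod_name, status["name"], reason))
            elif status.get("restartCount", 0) >= restart_threshold:
                # "FrequentRestarts" is this sketch's own label, not a Kubernetes reason.
                problems.append((pod_name, status["name"], "FrequentRestarts"))
    return problems


# Hand-written sample in the shape of `kubectl get pods -o json` output.
SAMPLE = {
    "items": [
        {
            "metadata": {"name": "web-1"},
            "status": {"containerStatuses": [
                {"name": "web", "restartCount": 0, "state": {"running": {}}}
            ]},
        },
        {
            "metadata": {"name": "web-2"},
            "status": {"containerStatuses": [
                {"name": "web", "restartCount": 12,
                 "state": {"waiting": {"reason": "CrashLoopBackOff"}}}
            ]},
        },
    ]
}

if __name__ == "__main__":
    for pod, container, reason in find_unhealthy_containers(SAMPLE):
        print(f"{pod}/{container}: {reason}")
```

Once a problem container is identified, `kubectl describe pod <pod-name>` and `kubectl logs <pod-name> --previous` are the usual next steps for finding out why it failed.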

Conclusion

Kubernetes is a comprehensive container orchestration system that provides a platform for deploying, scaling, and managing containerized applications. It provides a wide range of features, including container orchestration, self-healing, service discovery and load balancing, automatic rollouts and rollbacks, config management, secrets management, and monitoring and logging. Kubernetes is used by a wide range of organizations for microservices, cloud-native applications, CI/CD, big data, and IoT.